Listening to Replit CEO Amjad Masad discuss "enterprise agent slop," I find myself wondering: if Claude Code and Cowork prove that high-quality agents are possible, why are most enterprise implementations still failing?

The "Enterprise Grade" Gap

The video suggests that most enterprise agents in production fall into two categories: AI coding and customer care. Companies seem to be either solving in-house software engineering challenges or deploying agents to improve support.

Why these scenarios and not others?

At my workplace, I am designing and building a service called the Engineering Copilot. Our mission is to provide expert-level agents for colleagues in different R&D roles. Think of an Architect Agent, a Troubleshooter, a Tech Writer, or a System Configurator. Each targets real-life engineering activities and is backed by ontologies built from diverse sources of Telco platform documentation.

Across all these agent types, we identified customer care scenarios as the most impactful. We selected an expert-level Troubleshooter Agent designed to measurably improve efficiency across Level 1, 2, and 3 support. This is now our highest-priority deliverable.

Imagine an incoming incident managed in Jira containing tons of technical details: log files with time-series data, email threads, office docs, and a huge corpus of product-specific technical documentation stored in the DITA standard.

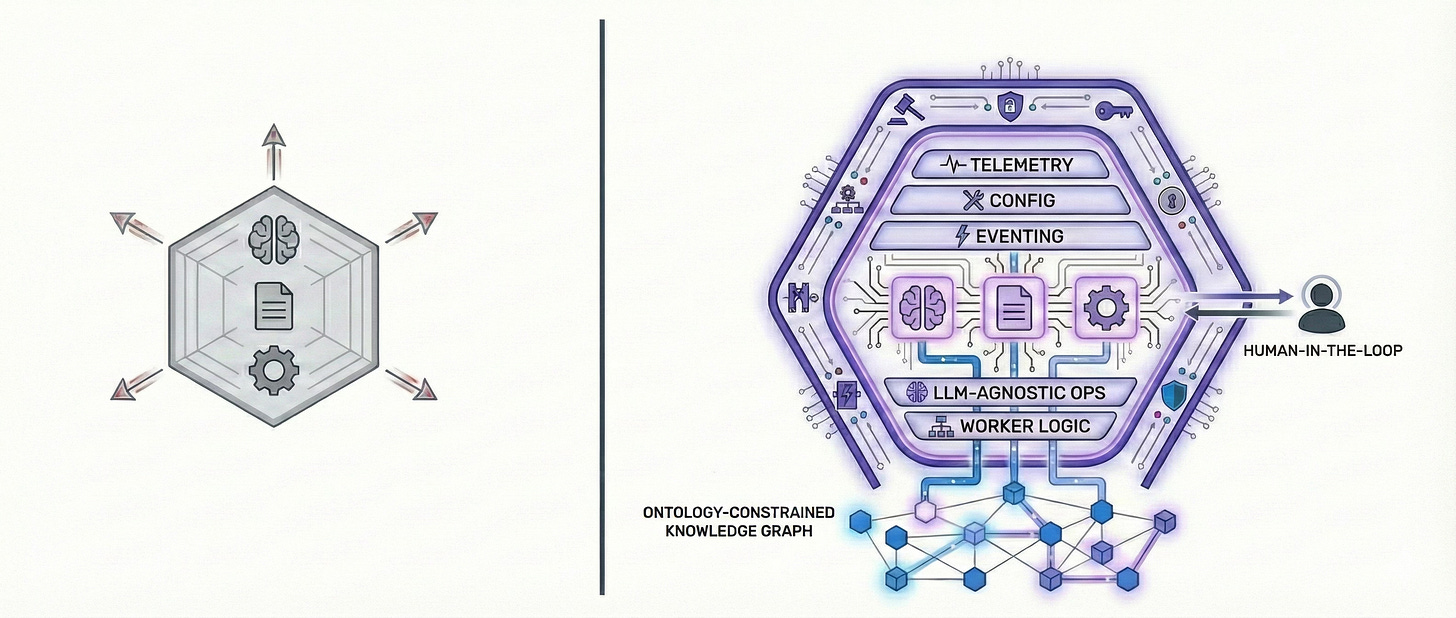

We are building the capability to trigger a human-in-the-loop agent that can perform in-depth root cause analysis and provide short- and long-term mitigation plans—all based on a deterministic knowledge graph retrieval service.

This is not naive RAG logic; it is an agent that can analyze, plan, execute, and reflect like any R&D expert in our organization.

To build it, the AI engineering team needs to go beyond basic LLM tool-calling capabilities. We need to make it truly “enterprise grade.” The architecture must contain layers for extensible telemetry, centralized configuration management, internal eventing, LLM-agnostic operations, and sophisticated worker logic that can handle both human-in-the-loop and autonomous operations.

All of this connects to a GraphRAG knowledge retrieval component that understands the agent’s “world view” via ontology TBoxes and is able to run depth- and breadth-first graph traversals based on a smart classification of user intent.
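To make the idea of intent-driven traversal concrete, here is a minimal sketch. It is not our production retrieval service: the toy graph, the node names, and the keyword-based `classify_intent` function are all hypothetical stand-ins, and a real system would classify intent with a model and traverse an ontology-backed graph store.

```python
from collections import deque

# Hypothetical toy knowledge graph: node -> outgoing neighbours.
GRAPH = {
    "incident": ["alarm", "log_entry"],
    "alarm": ["component"],
    "log_entry": ["component", "config_param"],
    "component": ["procedure"],
    "config_param": [],
    "procedure": [],
}


def classify_intent(query: str) -> str:
    """Placeholder classifier: a keyword rule standing in for a real model."""
    deep_markers = ("root cause", "why")
    return "deep" if any(m in query.lower() for m in deep_markers) else "broad"


def traverse(graph, start, strategy, max_nodes=10):
    """Visit nodes depth-first ("deep") or breadth-first ("broad")."""
    frontier = deque([start])
    seen = set()
    visited = []
    while frontier and len(visited) < max_nodes:
        # A stack (pop from the right) gives DFS; a queue (pop from the left) gives BFS.
        node = frontier.pop() if strategy == "deep" else frontier.popleft()
        if node in seen:
            continue
        seen.add(node)
        visited.append(node)
        neighbours = graph.get(node, [])
        # Reverse for DFS so the first-listed neighbour is explored first.
        frontier.extend(reversed(neighbours) if strategy == "deep" else neighbours)
    return visited


query = "What is the root cause of this alarm?"
print(traverse(GRAPH, "incident", classify_intent(query)))
```

A "why" question follows one chain of evidence as far as it goes before backtracking, while an overview question fans out across everything directly related to the incident first.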

The interview also highlights the importance of memory management, such as context compaction. These features are crucial, alongside short- and long-term memory capture and recall logic.
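Context compaction can be sketched in a few lines. The version below is an illustration under simplifying assumptions, not how any particular product does it: tokens are approximated by whitespace-separated words, the first message is assumed to be the system prompt, and `naive_summary` is a hypothetical placeholder where a real agent would call an LLM to summarize.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def rough_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words (an assumption)."""
    return len(text.split())


def naive_summary(messages: List[Message]) -> str:
    """Placeholder summarizer; a real agent would call an LLM here."""
    return f"Summary of {len(messages)} earlier messages"


def compact(
    messages: List[Message],
    budget: int,
    keep_recent: int = 2,
    summarize: Callable[[List[Message]], str] = naive_summary,
) -> List[Message]:
    """Replace older turns with one summary message when over budget."""
    total = sum(rough_tokens(m["content"]) for m in messages)
    cut = len(messages) - keep_recent
    if total <= budget or cut <= 1:
        return list(messages)  # nothing to compact
    head, older, recent = messages[0], messages[1:cut], messages[cut:]
    summary = {"role": "system", "content": summarize(older)}
    return [head, summary, *recent]


history = [
    {"role": "system", "content": "You are a troubleshooter"},
    {"role": "user", "content": "Node restarts at night"},
    {"role": "assistant", "content": "Please share the logs"},
    {"role": "user", "content": "Logs are attached now"},
    {"role": "assistant", "content": "Checking memory usage first"},
]
for message in compact(history, budget=10):
    print(message["role"], "|", message["content"])
```

The system prompt and the most recent turns survive verbatim, and everything in between collapses into a single summary message.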

And these are just the basics—I haven’t even mentioned agent governance, policy management, Role-Based Access Control (RBAC), or safe execution with failure management, compensation actions, and circuit breaker logic.
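As one small example of what safe execution involves, here is a minimal circuit breaker sketch for wrapping tool calls. The class name, thresholds, and injectable clock are illustrative assumptions rather than our actual implementation; production code would also need thread safety, metrics, and per-tool policies.

```python
import time


class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after  # cool-down in seconds
        self.clock = clock              # injectable for testing
        self.failures = 0
        self.opened_at = None           # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; call skipped")
            # Cool-down elapsed: half-open, so one more failure re-opens it.
            self.opened_at = None
            self.failures = self.max_failures - 1
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            raise
        self.failures = 0  # any success fully closes the circuit
        return result


breaker = CircuitBreaker(max_failures=2, reset_after=10.0)
print(breaker.call(lambda: "tool call succeeded"))
```

After repeated failures the breaker stops calling the failing tool for the cool-down period, which gives the agent a clear signal to fall back, compensate, or escalate to a human instead of retrying blindly.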

Welcome to the challenging world of enterprise architectures, ontology modeling, natural language query engines for graphs, and semantic layer development.

Without the right architecture, attempts to deliver on the promise of the “agentic age” will fail. There is nothing new in software projects failing; what is special here is that an AI engineering team needs to develop many new skills and competencies on top of existing SWE expertise.

My Take

We shouldn’t target generalized agents; we must focus on specialized agents with true enterprise-grade features.

The value of agents relies on human expertise; this is unavoidable.

Agents should focus on automation for domain experts to deliver results.

Full autonomy—including self-learning, self-healing, and self-optimization—should come later.

Watch the video here: Replit CEO Amjad Masad on AI Agents Podcast